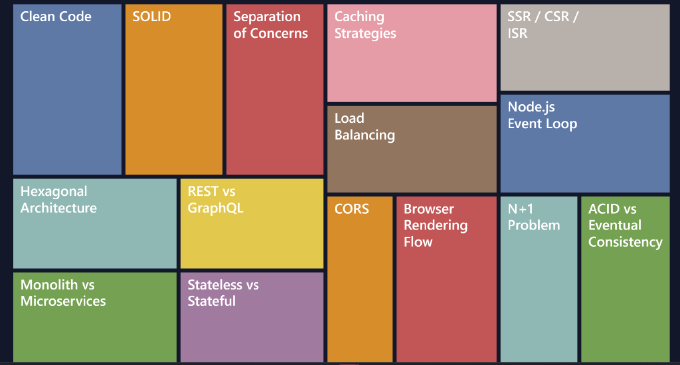

Must-Know Web Development Concepts in 2026

Software can be perfectly functional, secure, and even performant, yet still become a long-term problem. The real test of a system is not whether it works today, but whether it can survive tomorrow’s requirements. When code becomes difficult to modify, every small change risks breaking something unrelated. Features take longer to implement, bugs appear in unexpected places, and eventually even simple improvements feel dangerous.

Maintainability is what prevents this scenario. When a codebase is designed with maintainability in mind, it can evolve without constant fear of collapse.

Clean Code

One of the earliest and most influential discussions about writing maintainable code appears in the book Clean Code: A Handbook of Agile Software Craftsmanship by Robert C. Martin. The central idea is simple: code should be written for humans first and machines second. Computers do not care if a function is understandable, but the next developer who reads it certainly will. Clean code emphasizes clear naming, small functions, minimal complexity, and consistent structure. When code communicates its intent clearly, developers spend less time deciphering it and more time improving it. If you want a quick overview of the key ideas from the book, you can read this helpful summary:

https://cleancodeguy.com/blog/clean-code-robert-martin-book

SOLID

Beyond readability, maintainability is reinforced by a set of design principles commonly known as SOLID. These principles aim to reduce tight coupling and prevent the ripple effects that occur when one change breaks multiple unrelated parts of a system.

The first principle, Single Responsibility, suggests that a component should have only one reason to change. When a function or module handles multiple concerns, even a small modification may unintentionally affect other responsibilities.

Open/Closed encourages systems to be open for extension but closed for modification. Instead of constantly rewriting existing code, developers should be able to extend behavior through abstraction.
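As a minimal sketch of this idea, assume a hypothetical pricing module in which every rule is an object with an `apply` method. The names `percentOff`, `flatOff`, `roundDown`, and `finalPrice` are invented for illustration. New behavior is added by writing another rule, while `finalPrice` itself stays untouched.

```javascript
// Hypothetical pricing rules; each one is a small object with the same shape.
const percentOff = (percent) => ({
  apply: (amount) => amount * (1 - percent / 100),
})

const flatOff = (value) => ({
  apply: (amount) => Math.max(0, amount - value),
})

// Closed for modification: this function does not change when a rule is added.
function finalPrice(amount, rules) {
  return rules.reduce((current, rule) => rule.apply(current), amount)
}

// Open for extension: a new rule is just another object with apply().
const roundDown = { apply: (amount) => Math.floor(amount) }

// 200 -> 150 (25% off) -> 144.5 (5.5 off) -> 144 (rounded down)
const price = finalPrice(200, [percentOff(25), flatOff(5.5), roundDown])
```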

Liskov Substitution focuses on reliable inheritance. If an instance of a subclass is used wherever its base class is expected, the application should continue working correctly without unexpected behavior.
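The difference is easier to see side by side. The sketch below uses invented storage classes (`FileStorage`, `LoggingStorage`, `ReadOnlyStorage`) and a `backup` function written against the base type. One subclass can stand in for the base class safely, the other cannot.

```javascript
class FileStorage {
  constructor() {
    this.files = new Map()
  }
  save(name, content) {
    this.files.set(name, content)
    return name
  }
  read(name) {
    return this.files.get(name)
  }
}

// Substitutable: same inputs, same results; the extra logging is invisible to callers.
class LoggingStorage extends FileStorage {
  constructor() {
    super()
    this.log = []
  }
  save(name, content) {
    this.log.push(`save ${name}`)
    return super.save(name, content)
  }
}

// Not substitutable: callers of FileStorage do not expect save() to throw.
class ReadOnlyStorage extends FileStorage {
  save() {
    throw new Error('read-only storage')
  }
}

// Code written against the base type.
function backup(storage) {
  storage.save('notes.txt', 'hello')
  return storage.read('notes.txt')
}

const results = [new FileStorage(), new LoggingStorage()].map(backup)
```

Passing `new ReadOnlyStorage()` to `backup` throws, which is exactly the kind of surprise the principle is meant to rule out.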

Interface Segregation warns against forcing implementations to depend on methods they do not need. Smaller, focused interfaces make code easier to understand and adapt.

Finally, Dependency Inversion promotes depending on abstractions rather than concrete implementations. This reduces coupling and allows components to evolve independently.
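A small sketch, with all names hypothetical: `createWelcomeService` is the high-level policy and only assumes it receives "something with a `send(to, text)` method". The console notifier and the fake notifier are two interchangeable details behind that abstraction.

```javascript
// High-level policy: depends only on an object that offers send(to, text).
function createWelcomeService(notifier) {
  return {
    welcome(user) {
      return notifier.send(user.email, `Welcome, ${user.name}!`)
    },
  }
}

// Low-level detail #1: prints to the console.
const consoleNotifier = {
  send(to, text) {
    console.log(`[to ${to}] ${text}`)
    return true
  },
}

// Low-level detail #2: records messages, handy in unit tests.
function createFakeNotifier() {
  const sent = []
  return {
    sent,
    send(to, text) {
      sent.push({ to, text })
      return true
    },
  }
}

const fake = createFakeNotifier()
createWelcomeService(fake).welcome({ name: 'Ada', email: 'ada@example.com' })
```

Swapping `fake` for `consoleNotifier` (or a real email adapter) requires no change to `createWelcomeService`.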

While these principles encourage flexibility, they should not lead to unnecessary complexity. Overengineering is another common trap. Three practical ideas help prevent it:

- DRY (Don’t Repeat Yourself) reminds us to avoid duplicated logic, although abstraction should not be introduced prematurely.

- KISS (Keep It Simple, Stupid) encourages the simplest solution that solves the problem effectively.

- YAGNI (You Ain’t Gonna Need It) warns against building features “just in case” they might be needed someday. Most of the time, those hypothetical features never arrive.

Separation of Concerns

Another fundamental concept in software architecture is Separation of Concerns. The idea is that different parts of a system should handle different responsibilities without leaking into each other’s domains. When responsibilities are clearly separated, systems become easier to test, refactor, and extend.

In a typical backend application, this separation appears as layers. The controller layer acts as the entry point. It deals with HTTP requests, validates input, calls business logic, and returns responses. In frameworks such as Next.js, API routes or route handlers often serve this purpose.

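```javascript
// app/api/users/[id]/route.js
// The import path is an assumption about the project layout.
import * as userService from '@/services/userService'

export async function GET(req, { params }) {
  // In Next.js 15 and later, params is a Promise and must be awaited.
  const { id } = await params
  const user = await userService.getUser(id)
  return Response.json(user)
}
```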

The service layer contains the core business logic. This is where application rules and domain decisions live. Services coordinate actions, enforce validation rules, and orchestrate workflows.

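```javascript
// services/userService.js
// Both import paths are assumptions about the project layout.
import { userRepository } from '../repositories/userRepository'
import { UserNotFoundError } from '../errors'

export async function getUser(id) {
  const user = await userRepository.findById(id)
  if (!user) {
    throw new UserNotFoundError()
  }
  return user
}
```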

Below that sits the repository layer, responsible for interacting with the database. It hides the details of data storage and provides a consistent interface for retrieving and persisting data.
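To make the "consistent interface" concrete, here is a minimal sketch of a repository backed by an in-memory Map. The factory name `createInMemoryUserRepository` and the `save` method are assumptions for illustration; only `findById` appears in the service above. A database-backed version would expose the same methods and hide its queries behind them.

```javascript
// In-memory stand-in for a database-backed repository (illustrative only).
function createInMemoryUserRepository(seed = []) {
  const rows = new Map(seed.map((user) => [user.id, user]))
  return {
    // Resolves to the user, or null when no row matches.
    async findById(id) {
      return rows.get(id) ?? null
    },
    // Stores a copy so callers cannot mutate the "table" by accident.
    async save(user) {
      rows.set(user.id, { ...user })
      return user
    },
  }
}

const userRepository = createInMemoryUserRepository([{ id: '1', name: 'Ada' }])
```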

Finally, the infrastructure layer includes external systems such as database clients, caching systems like Redis, email providers, payment gateways, or file storage adapters.

What matters most is the direction of dependencies. Controllers may call services, services may call repositories, and repositories may use infrastructure components—but never the other way around. This ensures that business logic remains independent of frameworks and external systems.

If you’d like a quick refresher on the concept, this article provides a concise overview of Separation of Concerns:

https://www.geeksforgeeks.org/software-engineering/separation-of-concerns-soc/

Clean / Hexagonal Architecture

This idea leads naturally into Clean Architecture and the closely related Hexagonal Architecture (also known as Ports and Adapters). The two use different terminology but share a core philosophy: the most valuable part of a system, the domain logic, should not depend on frameworks, databases, or third-party services. Those technologies are interchangeable, while business logic is not.

Consider a typical API route that directly interacts with a database and an external payment service. In such a design, the routing layer becomes tightly coupled with implementation details. Testing the logic becomes difficult because it requires the full environment.

In contrast, a cleaner architecture delegates these responsibilities to separate layers. The route handler simply forwards the request to an application service. That service interacts with repositories and external adapters through abstractions. The business logic remains isolated from infrastructure concerns.

This approach puts the protection where it matters most. Framework code can be replaced relatively easily, but rewriting business logic is expensive and risky.

Let’s look at an example:

```javascript
export async function POST(req) {
  const body = await req.json()

  await createUser(
    new PrismaUserRepository(),
    new StripeAdapter(),
    body
  )

  return Response.json({ success: true })
}
```

In this example, the route handler only acts as an entry point for HTTP communication. It does not contain business rules, database queries, or payment logic. Instead, the core operation is delegated to the application layer through createUser.

The concrete repository and adapter are constructed at the edge of the system and passed into createUser, which relies only on the methods they expose rather than on Prisma or Stripe directly. This means that tomorrow you could replace Prisma with another database client, or swap Stripe for a different payment provider, by writing a new adapter and changing this one call site, without rewriting the business logic.
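The article does not show createUser itself, so the following is only a sketch of what such a use case might look like. The method names `save` and `createCustomer`, the validation rule, and the returned shape are all assumptions. The point is that the function can be exercised with in-memory fakes, with no database or payment provider involved.

```javascript
// Application-layer use case. It knows only the shape of its collaborators:
// userRepository.save(user) and paymentGateway.createCustomer(email).
async function createUser(userRepository, paymentGateway, input) {
  if (!input?.email) {
    throw new Error('email is required')
  }
  const customerId = await paymentGateway.createCustomer(input.email)
  return userRepository.save({ email: input.email, customerId })
}

// In-memory fakes that satisfy the same shape, for example in unit tests.
const savedUsers = []
const fakeRepository = {
  async save(user) {
    savedUsers.push(user)
    return user
  },
}
const fakeGateway = {
  async createCustomer(email) {
    return `cus_${email}`
  },
}

// A promise for the created user; no Prisma or Stripe required.
const created = createUser(fakeRepository, fakeGateway, { email: 'ada@example.com' })
```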

A detailed explanation of Hexagonal Architecture by its creator can be found here:

https://alistair.cockburn.us/hexagonal-architecture

You can also watch this practical explanation:

https://www.youtube.com/live/AOIWUPjal60?si=3BF00URql1Tor9FF

Monolith vs Microservices

Understanding architecture also means understanding system design choices. One of the most common decisions is between monolithic systems and microservices.

A monolith is a single deployable application. It is usually easier to develop and debug, especially in early stages. However, scaling specific components independently can be difficult.

Microservices divide the system into smaller services that can be deployed and scaled independently. This flexibility comes at the cost of increased complexity. Network communication, distributed failures, and service orchestration introduce new challenges.

REST vs GraphQL

API design is another important architectural consideration. REST has traditionally been the dominant approach, exposing resources through multiple endpoints such as /users/1 or /orders/42. It relies heavily on HTTP methods, status codes, and caching mechanisms.

GraphQL takes a different approach. Instead of multiple endpoints, clients interact with a single endpoint and specify exactly which fields they need. This reduces the over-fetching and under-fetching common in REST APIs, although deeply nested or unbounded queries can become expensive to resolve, and HTTP-level caching is harder to apply than with REST.

Stateless vs Stateful

Another design decision that affects scalability is whether the system is stateless or stateful.

In a stateless architecture, the server does not store client session data between requests. Each request contains everything necessary for processing, often in the form of authentication tokens such as JWTs. This model is ideal for cloud environments because any server instance can handle any request.

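```javascript
// Express.js, with jwt imported from the jsonwebtoken package
app.use((req, res, next) => {
  const token = req.headers.authorization?.split(' ')[1]
  try {
    // jwt.verify throws when the token is missing, malformed, or expired
    req.user = jwt.verify(token, SECRET)
  } catch (err) {
    return res.status(401).json({ error: 'Invalid or missing token' })
  }
  next()
})
```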

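Stateful systems, on the other hand, store session information on the server. While this approach can simplify application logic, it complicates scaling: with in-memory sessions, every request from a client must reach the same server that holds its session (sticky sessions), or the sessions must be moved to a shared store such as Redis.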

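```javascript
// Express.js, with session imported from the express-session package
app.use(session({
  // Read the secret from the environment instead of hard-coding it
  secret: process.env.SESSION_SECRET,
  resave: false,
  saveUninitialized: false
  // With no store option, sessions live in this process's memory (MemoryStore)
}))
```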

Modern cloud architectures tend to prefer stateless designs because they work naturally with container orchestration platforms, serverless deployments, and horizontal scaling.

Caching Strategies

Performance in distributed systems also depends heavily on caching. Caching is not a single mechanism but a layered strategy. Data can be cached in the browser, at the CDN edge, within reverse proxies like Nginx, inside the application itself using systems such as Redis, and sometimes even at the database level.

Each layer solves a different problem. Browser caching reduces repeated downloads of static assets. CDNs bring content closer to users geographically. Reverse proxies can cache entire responses from backend servers. Application-level caches avoid expensive database queries by storing frequently accessed data.

Different cache strategies determine how cached data interacts with the database. Cache-aside, the most common pattern, reads from the cache first, falls back to the database on a miss, and then stores the result in the cache. Write-through caching updates the cache and the database as part of the same write, so the two stay in sync at the cost of slower writes. Write-behind caching prioritizes write performance by writing to the cache first and updating the database asynchronously, which risks losing recent writes if the cache fails before they are persisted.
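The two write strategies can be contrasted in a few lines. This is a toy model: plain Maps stand in for the cache and the database, and the function names are invented. A real write-behind cache would flush on a timer or queue and would have to handle failed writes.

```javascript
// Plain Maps stand in for the cache and the database (illustrative only).
const cache = new Map()
const database = new Map()

// Write-through: the write is not done until both stores are updated.
function writeThrough(key, value) {
  database.set(key, value)
  cache.set(key, value)
}

// Write-behind: update the cache now, queue the database write for later.
const pendingWrites = []
function writeBehind(key, value) {
  cache.set(key, value)
  pendingWrites.push([key, value])
}

// Called later (for example on a timer) to persist queued writes in order.
function flushPendingWrites() {
  while (pendingWrites.length > 0) {
    const [key, value] = pendingWrites.shift()
    database.set(key, value)
  }
}

writeThrough('user:1', 'Ada')
writeBehind('user:2', 'Linus')
const persistedBeforeFlush = database.has('user:2') // false: only in the cache so far
flushPendingWrites()
const persistedAfterFlush = database.has('user:2') // true
```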

In Next.js, caching can be controlled at the route handler level, edge runtime, or component rendering layer depending on the type of data you are serving.

For example, browser-level caching can be influenced by HTTP headers returned from route handlers:

```javascript
export async function GET() {
  const data = await fetchData()

  return new Response(JSON.stringify(data), {
    headers: {
      "Cache-Control": "public, max-age=60"
    }
  })
}
```

The max-age=60 directive lets the browser reuse the response for 60 seconds without contacting the server, and public additionally allows shared caches such as CDNs and reverse proxies to store it.

As for cache-aside strategy, in Next.js applications, Redis is often used as the external cache layer:

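```javascript
// Assumes a connected node-redis (v4+) client `redis` and a Prisma-style `db`
async function getUser(id) {
  const cached = await redis.get(`user:${id}`)
  if (cached) return JSON.parse(cached) // cache hit

  // Cache miss: read from the database, then populate the cache
  const user = await db.user.findUnique({
    where: { id }
  })

  await redis.set(
    `user:${id}`,
    JSON.stringify(user),
    { EX: 60 } // expire after 60 seconds
  )

  return user
}
```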

Next.js also supports edge-level or ISR-style caching for server-rendered content. You can specify revalidation intervals to regenerate cached responses in the background:

```javascript
export const revalidate = 60

export async function GET() {
  const data = await generatePageData()
  return Response.json(data)
}
```

This approach is particularly useful for content that does not change frequently, such as public pages or aggregated API responses, because it balances freshness and performance while minimizing server load.

Load Balancing

As systems scale further, load balancing becomes necessary. Instead of a single server handling all requests, traffic is distributed across multiple servers by a load balancer such as HAProxy, Nginx, or cloud-based services.

This improves throughput and resilience, but it also reinforces the need for stateless design. Any request may reach any server instance, so relying on in-memory state becomes unreliable.
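Round-robin is one of the simplest distribution strategies and is enough to show why in-memory state is fragile. The sketch below uses made-up server names and an invented `createRoundRobin` helper. Consecutive requests from the same client land on different instances.

```javascript
// Returns a function that hands out servers in rotation.
function createRoundRobin(servers) {
  if (servers.length === 0) throw new Error('at least one server is required')
  let next = 0
  return function pick() {
    const server = servers[next]
    next = (next + 1) % servers.length
    return server
  }
}

const pick = createRoundRobin(['app-1', 'app-2', 'app-3'])

// Four consecutive requests: app-1, app-2, app-3, then app-1 again.
const assigned = [pick(), pick(), pick(), pick()]
```

Real load balancers typically offer other strategies as well, such as least connections or hashing on the client address.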

SSR / CSR / ISR

On the frontend side, rendering strategies also influence performance and scalability. Client-side rendering (CSR) builds the UI entirely in the browser using JavaScript. Server-side rendering (SSR) generates HTML on each request. Incremental Static Regeneration (ISR), a feature of Next.js, generates static pages that can be refreshed periodically in the background. Each approach offers trade-offs between performance, freshness, and server load.

Figure: ISR rendering flow
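As a rough model of that flow, and not a description of how Next.js implements it, the sketch below caches one page, serves the cached copy on every request, and regenerates it once it is older than the revalidation interval. `createIsrPage` and the injected clock are hypothetical, and regeneration runs inline here, whereas a real server would do it in the background.

```javascript
// Simplified model of ISR for a single page.
function createIsrPage(render, revalidateSeconds, now = () => Date.now()) {
  let cached = null // { html, generatedAt }

  function regenerate() {
    cached = { html: render(), generatedAt: now() }
  }

  return function handleRequest() {
    if (cached === null) {
      regenerate() // first request (or build time): generate the page
      return cached.html
    }
    const ageSeconds = (now() - cached.generatedAt) / 1000
    const response = cached.html // always answer from the cache
    if (ageSeconds >= revalidateSeconds) {
      regenerate() // stale: refresh for the next visitor
    }
    return response
  }
}

// A fake clock (in milliseconds) makes the behaviour easy to follow.
let clock = 0
let version = 0
const page = createIsrPage(() => `v${++version}`, 60, () => clock)

const first = page()  // 'v1': generated on the first request
clock = 30000
const second = page() // 'v1': still fresh
clock = 61000
const third = page()  // 'v1': stale copy served, regeneration triggered
const fourth = page() // 'v2': the regenerated page
```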

Node.js Event Loop

Developers working with Node.js should also understand the basics of its event loop. Node runs JavaScript on a single thread but handles concurrency through asynchronous operations. Network calls, including most database queries, are handed to the operating system's non-blocking I/O, while file operations and some other tasks run on libuv's thread pool. When an operation completes, its callback is queued, and the event loop runs it once the call stack is empty. This allows Node.js to handle many simultaneous requests efficiently, as long as developers avoid CPU-intensive synchronous tasks that block the event loop.
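A short experiment shows the ordering. Synchronous code runs to completion first, then promise callbacks (microtasks), then timer callbacks. The `order` array is only there to record what ran when.

```javascript
const order = []

order.push('start')

// Timer callback: queued for a later turn of the event loop.
setTimeout(() => order.push('timeout'), 0)

// Promise callback: a microtask, run as soon as the current script finishes.
Promise.resolve().then(() => order.push('microtask'))

order.push('end')

// At this point nothing asynchronous has run yet: order is ['start', 'end'].
// Prints "start -> end -> microtask -> timeout" once the callbacks have run.
setTimeout(() => console.log(order.join(' -> ')), 10)
```

A long synchronous loop placed before the final line would delay both callbacks, which is what "blocking the event loop" means in practice.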

HTTP Methods and Status Codes

Web development fundamentals remain equally important. HTTP methods define how resources are manipulated, and status codes communicate the result of each request.

HTTP methods

- GET → retrieve data (safe and idempotent)

- POST → create a new resource (not idempotent)

- PUT → replace an existing resource (idempotent)

- PATCH → partially update a resource (not necessarily idempotent)

- DELETE → remove a resource (idempotent)

Common HTTP status codes

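- 200 – OK: request succeeded

- 201 – Created: resource successfully created

- 204 – No Content: success with no response body

- 301 / 302 – Moved Permanently / Found: permanent and temporary redirects

- 400 – Bad Request: malformed or invalid request

- 401 – Unauthorized: authentication is missing or invalid

- 403 – Forbidden: authenticated, but not allowed to access the resource

- 404 – Not Found: the resource does not exist

- 500 – Internal Server Error: unexpected failure on the server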

Understanding these basics helps design predictable and REST-friendly APIs.
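To tie methods and status codes together, here is a minimal sketch of a framework-free handler for a hypothetical notes resource. The `notes` store and the `handle` function are invented for illustration, and 405 (Method Not Allowed) is used for anything the sketch does not support.

```javascript
// Hypothetical in-memory resource store used only for this illustration.
const notes = new Map([['1', { id: '1', text: 'hello' }]])

// Returns { status, body } for a tiny notes API.
function handle(method, id, body) {
  switch (method) {
    case 'GET':
      return notes.has(id)
        ? { status: 200, body: notes.get(id) }
        : { status: 404, body: { error: 'Not Found' } }
    case 'PUT': {
      if (!body || typeof body.text !== 'string') {
        return { status: 400, body: { error: 'text is required' } }
      }
      const existed = notes.has(id)
      notes.set(id, { id, text: body.text })
      // Replacing an existing note is 200; creating it at this URL is 201.
      return { status: existed ? 200 : 201, body: notes.get(id) }
    }
    case 'DELETE':
      notes.delete(id) // deleting twice leaves the same state: idempotent
      return { status: 204, body: null }
    default:
      return { status: 405, body: { error: 'Method Not Allowed' } }
  }
}
```

Whether a repeated DELETE should answer 204 or 404 is a design choice. The sketch returns 204 both times to keep the response identical across retries.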

CORS (Cross-Origin Resource Sharing)

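Another concept every web developer encounters quickly is CORS (Cross-Origin Resource Sharing). CORS is a browser mechanism that relaxes the same-origin policy: by default, scripts on one origin cannot read responses from another origin unless the server explicitly allows it through Access-Control-* response headers. A key detail that is sometimes misunderstood is that CORS is enforced by browsers, not by servers. The server only declares what is allowed, and a simple request may still reach the server even though the browser withholds the response from the script. Server-to-server communication is not restricted by CORS at all.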

In a Next.js route handler, allowing cross-origin requests usually means returning the proper headers in the response. For example:

```typescript
export async function GET(req: Request) {
  return new Response("ok", {
    headers: {
      "Access-Control-Allow-Origin": "https://frontend.com"
    }
  })
}

// For preflight requests
export async function OPTIONS() {
  return new Response(null, {
    headers: {
      "Access-Control-Allow-Origin": "https://frontend.com",
      "Access-Control-Allow-Methods": "GET,POST,OPTIONS",
      "Access-Control-Allow-Headers": "Content-Type, Authorization"
    }
  })
}
```
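The handlers above allow a single hard-coded origin. When several frontends need access, a common approach is to check the request's Origin header against an allowlist and echo back the match, because Access-Control-Allow-Origin accepts only one origin (or `*`), not a list. The helper below is a sketch, and the second origin is a made-up example.

```javascript
// Hypothetical allowlist; replace with the origins your frontends are served from.
const ALLOWED_ORIGINS = new Set([
  'https://frontend.com',
  'https://admin.frontend.com',
])

function corsHeaders(requestOrigin) {
  // Vary tells caches that the response depends on the Origin request header.
  const headers = { Vary: 'Origin' }
  if (requestOrigin && ALLOWED_ORIGINS.has(requestOrigin)) {
    // Echo back the single matching origin.
    headers['Access-Control-Allow-Origin'] = requestOrigin
  }
  return headers
}
```

Inside a route handler this could be used as `corsHeaders(req.headers.get('origin'))` and spread into the response headers.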

In practice, many teams avoid CORS issues altogether by routing external API calls through their own backend or reverse proxy. Instead of calling a third-party API directly from the browser, the frontend calls an endpoint on its own origin, which then forwards the request to the external service. With Nginx this can look like:

```nginx
location /api/ {
    proxy_pass https://api.service.com;
    proxy_http_version 1.1;
    proxy_ssl_server_name on;
}
```

This approach keeps the browser within the same origin and also gives the backend an opportunity to add authentication, caching, or logging.

Browser Rendering Flow

Another important area is understanding how browsers actually render a page. When a page loads, the browser first downloads the HTML and builds the DOM tree. While doing so, it may pause if it encounters blocking scripts. CSS is parsed separately into a CSSOM tree, and since layout depends on styles, CSS is considered render-blocking. The browser then combines the DOM and CSSOM into a render tree that contains only the visible elements. After that it calculates layout, determining the position and size of each element. This step is sometimes called reflow and can become expensive if triggered repeatedly. Once layout is known, the browser paints pixels such as text, colors, borders, and shadows. Finally, layers are composited together, often with help from the GPU, especially when properties like transform or opacity are used.

For developers this mainly translates into a few practical rules: avoid repeatedly forcing layout calculations, prefer transform and opacity for animations, be aware of render-blocking resources such as synchronous JavaScript or large CSS files, and use script attributes like defer or async when appropriate. In Next.js specifically, performance can often be improved by using the built-in <Image /> component to prevent layout shifts, by loading heavy components with dynamic imports, and by using server rendering strategies like SSR or ISR to reduce the amount of work the browser has to do during hydration.

On the database side, most low-level fundamentals such as indexing and query optimization have not changed much for decades, but there are still some architectural concepts that developers frequently encounter.

N+1 problem

One of them is the N+1 query problem. This happens when the application first fetches a list of records and then performs an additional query for each individual item to retrieve related data. A simple example might look like this:

```javascript
const posts = await db.post.findMany() // 1 query

for (const post of posts) {
  post.comments = await db.comment.findMany({
    where: { postId: post.id }
  }) // N queries
}
```

If there are 100 posts, the application ends up executing 101 queries. This pattern can quickly become a serious performance problem. The usual solutions involve loading related data in a single query using joins or eager loading, batching queries with WHERE IN (...), or using a batching mechanism such as the DataLoader pattern commonly seen in GraphQL APIs.
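The batching approach can be sketched without a real database. Below, an in-memory `db` object (hypothetical, as are all the names) counts how many "queries" it receives. All comments are fetched in one call, the equivalent of a single WHERE IN (...), and then grouped by post in application code.

```javascript
// In-memory stand-in for a database that counts the queries it receives.
const comments = [
  { id: 1, postId: 1, text: 'first' },
  { id: 2, postId: 1, text: 'second' },
  { id: 3, postId: 2, text: 'third' },
]
let queryCount = 0
const db = {
  // Equivalent of: SELECT * FROM comments WHERE post_id IN (...)
  findCommentsByPostIds(postIds) {
    queryCount += 1
    return comments.filter((comment) => postIds.includes(comment.postId))
  },
}

function attachComments(posts) {
  // One query for all posts instead of one query per post.
  const rows = db.findCommentsByPostIds(posts.map((post) => post.id))

  // Group the rows by postId in memory.
  const byPostId = new Map()
  for (const row of rows) {
    if (!byPostId.has(row.postId)) byPostId.set(row.postId, [])
    byPostId.get(row.postId).push(row)
  }
  return posts.map((post) => ({ ...post, comments: byPostId.get(post.id) ?? [] }))
}

const result = attachComments([{ id: 1 }, { id: 2 }, { id: 3 }])
```

However many posts are passed in, `queryCount` grows by one per call to `attachComments`. ORMs usually offer the same effect through an eager-loading option.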

ACID vs Eventual Consistency

Another concept that becomes important when working with modern systems is the difference between ACID guarantees and eventual consistency. Traditional relational databases typically follow the ACID model. Atomicity means a transaction either fully succeeds or fully fails. Consistency ensures that database constraints remain valid. Isolation prevents concurrent transactions from interfering with one another, and durability guarantees that once data is committed it will not disappear even if the system crashes. A classic example is a bank transfer: subtracting money from one account and adding it to another must either both succeed or both fail.
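Atomicity in the bank transfer example can be illustrated with a toy model. The `transfer` function below (a hypothetical helper, not a database API) applies both changes to a copy of the balances and returns the new state only if every step succeeds. A real database achieves this with transactions rather than object copies.

```javascript
// Toy model of atomicity: apply changes to a copy, commit only if all succeed.
function transfer(accounts, from, to, amount) {
  const draft = { ...accounts } // work on a copy, like an open transaction
  if (!(from in draft) || !(to in draft)) throw new Error('unknown account')
  if (amount <= 0) throw new Error('amount must be positive')

  draft[from] -= amount
  if (draft[from] < 0) throw new Error('insufficient funds') // abort: nothing applied
  draft[to] += amount

  return draft // "commit": the caller swaps in the new state
}

let accounts = { alice: 100, bob: 20 }
accounts = transfer(accounts, 'alice', 'bob', 30) // { alice: 70, bob: 50 }

try {
  accounts = transfer(accounts, 'alice', 'bob', 500)
} catch (err) {
  // The failed transfer left both balances untouched.
}
```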

In distributed systems and many NoSQL databases the model is often different. Instead of guaranteeing immediate consistency, they follow eventual consistency. In such systems data may temporarily differ between replicas, but over time all nodes converge to the same state. For example, if a user updates their profile picture, some regions might still show the old image for a short time until synchronization finishes. This temporary inconsistency is usually acceptable in exchange for better scalability and global availability.
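The profile picture scenario can be modelled with two replicas that reconcile using last-write-wins, which is only one of several possible conflict-resolution strategies and is assumed here for simplicity. `createReplica` and `sync` are invented helpers, and timestamps are plain numbers.

```javascript
// Each replica stores { value, timestamp } per key; the newest write wins.
function createReplica() {
  const data = new Map()
  return {
    write(key, value, timestamp) {
      const current = data.get(key)
      if (!current || timestamp > current.timestamp) {
        data.set(key, { value, timestamp })
      }
    },
    read(key) {
      return data.get(key)?.value
    },
    entries() {
      return [...data.entries()]
    },
  }
}

// Synchronization step: copy every entry to the other replica, in both directions.
function sync(a, b) {
  for (const [key, { value, timestamp }] of a.entries()) b.write(key, value, timestamp)
  for (const [key, { value, timestamp }] of b.entries()) a.write(key, value, timestamp)
}

const europe = createReplica()
const asia = createReplica()

europe.write('avatar', 'old.png', 1)
sync(europe, asia)

europe.write('avatar', 'new.png', 2)
const staleRead = asia.read('avatar') // 'old.png' until the next sync

sync(europe, asia)
const freshRead = asia.read('avatar') // 'new.png': the replicas have converged
```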

Recap

Building robust systems requires understanding all these concepts together. Clean code practices keep the codebase readable. Architectural patterns protect business logic from external dependencies. Proper system design enables scalability and resilience.

Ultimately, maintainable software is not just about writing code that works today. It is about creating systems that can continue evolving long after the original developers have moved on.